Activation Function

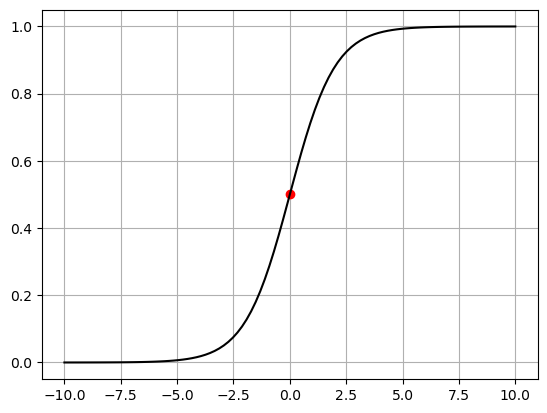

\[

\text{Sigmoid}(x)=\frac{1}{1+\exp(-x)}

\]
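As a rough illustration of the formula above, the following sketch evaluates the sigmoid in plain Python. The function name `sigmoid` and the two-branch form are assumptions made here for illustration, not taken from any particular library. The branch avoids calling `exp` on a large positive argument, which would overflow.

```python
import math


def sigmoid(x: float) -> float:
    """Sigmoid(x) = 1 / (1 + exp(-x)), evaluated without overflow."""
    if x >= 0:
        # exp(-x) is at most 1 here, so the direct formula is safe.
        return 1.0 / (1.0 + math.exp(-x))
    # For negative x, rewrite as exp(x) / (1 + exp(x)) so exp never overflows.
    e = math.exp(x)
    return e / (1.0 + e)
```

Both branches compute the same mathematical function; the second is the first with numerator and denominator multiplied by \(\exp(x)\).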

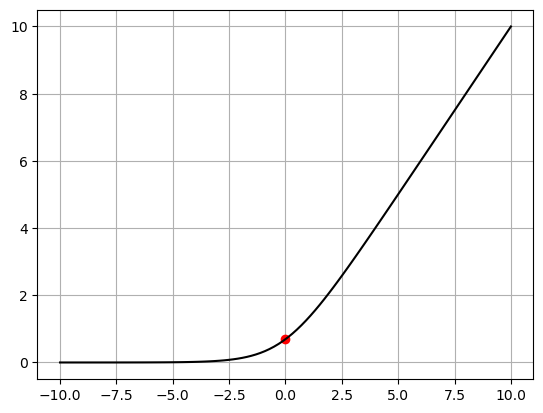

\[

\text{Softplus}(x)=\frac{1}{\beta}\times \log{\big(1+\exp{(\beta x)}\big)}

\]

Softplus is a smooth approximation to the ReLU function and can be used to constrain the output of a model to always be positive. For numerical stability, the implementation reverts to the linear function (returning \(x\) itself) when \(\beta x>\text{threshold}\), since \(\frac{1}{\beta}\log\big(1+\exp(\beta x)\big)\approx x\) for large \(\beta x\).
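The threshold behaviour described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the actual library implementation: the function name `softplus` and the default values `beta=1.0` and `threshold=20.0` are assumptions chosen for the example.

```python
import math


def softplus(x: float, beta: float = 1.0, threshold: float = 20.0) -> float:
    """Softplus(x) = (1 / beta) * log(1 + exp(beta * x)), with a linear fallback."""
    z = beta * x
    if z > threshold:
        # exp(z) may overflow here, and log(1 + exp(z)) is already very
        # close to z, so fall back to the linear function and return x.
        return x
    # log1p(t) computes log(1 + t) accurately even when t is tiny.
    return math.log1p(math.exp(z)) / beta
```

For example, `softplus(0.0)` equals \(\log 2\), while `softplus(1000.0)` returns `1000.0` directly instead of attempting `exp(1000.0)`, which would overflow.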

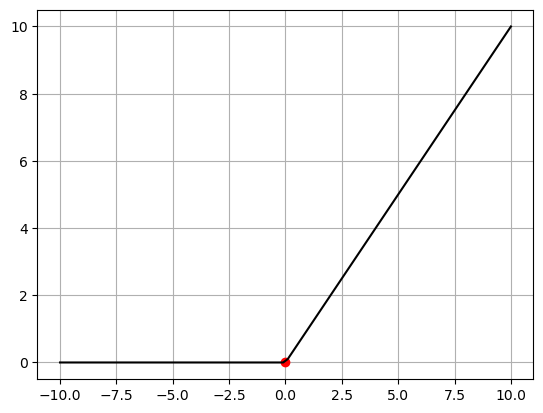

\[

\text{ReLU}(x)=\max(0,x)=\frac{\lvert x\rvert+x}{2}

\]
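The two forms of ReLU in the equation above can be compared with a short sketch. The function names `relu_max` and `relu_abs` are hypothetical, introduced only for this example.

```python
def relu_max(x: float) -> float:
    """ReLU written as max(0, x)."""
    return max(0.0, x)


def relu_abs(x: float) -> float:
    """ReLU written as (|x| + x) / 2."""
    return (abs(x) + x) / 2.0


# Compare the two forms on a few sample inputs.
samples = [-3.0, -0.5, 0.0, 0.5, 3.0]
agree = all(relu_max(x) == relu_abs(x) for x in samples)
```

When \(x<0\), \(\lvert x\rvert+x=0\), and when \(x\ge 0\), \(\lvert x\rvert+x=2x\), which is why the two expressions coincide.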