Boundary Conditions & Ensembles#

1. The theoretic foundation#

1.1. Boundary Conditions#

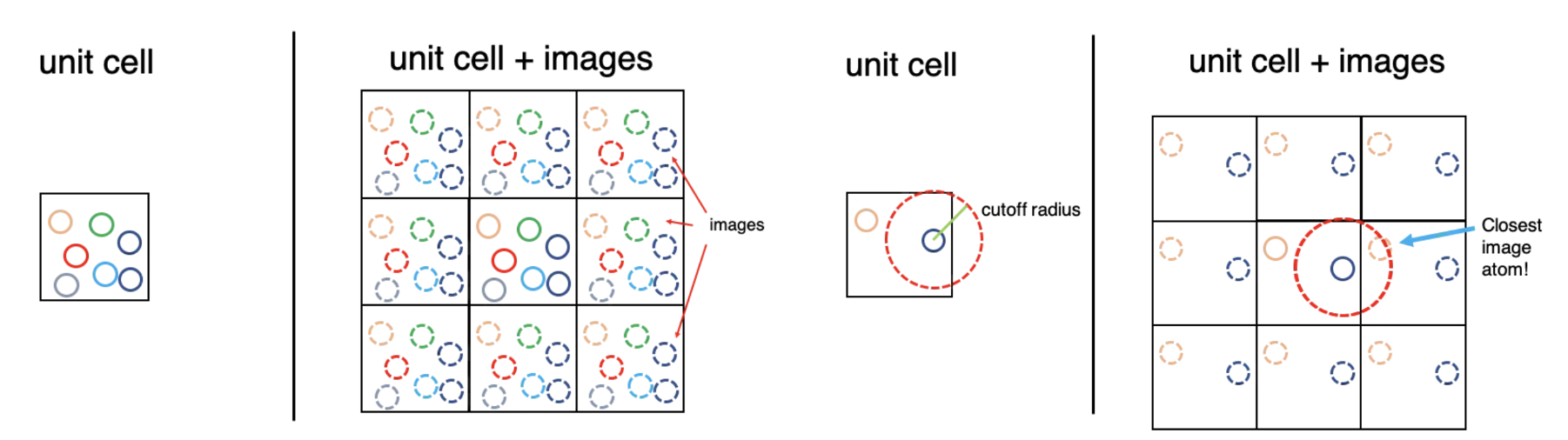

Due to the infinite size of the system, any atomistic simulation of macroscopic condensed matter can only cover a comparatively small detail of its atomic arrangement.

Periodic boundary conditions (PBCs) are a set of boundary conditions that are often chosen for approximating a large (infinite) system by using a small part called a unit cell.

During the simulation, each particle in the simulation only interacts with the closest image of the remaining particles. Which is called minimum image convention

1.2. Statistical mechanics in MD#

Two postulates for equilibrium statistical mechanics:

Microstates of equal energy are equally like

Time average is equivalent to ensemble average (“ergodicity”)

For a dynamical system defined by a state space \(\Omega\), a probability measure \(\mu\), and a measure-preserving transformation \(T:\Omega\rightarrow\Omega \), given a measurable function \(f\) :

The time average: \(\bar{f_t} = \displaystyle \lim_{T \to +\infty} \frac{1}{T} \int_0^T f(x(t))\, dt\)

The ensemble average: \(\langle f \rangle=\int_\Omega f(x)d\mu(x)\)

The Ergodic Hypothesis states that for an ergodic system: \(\bar{f_t}=\langle f\rangle\)

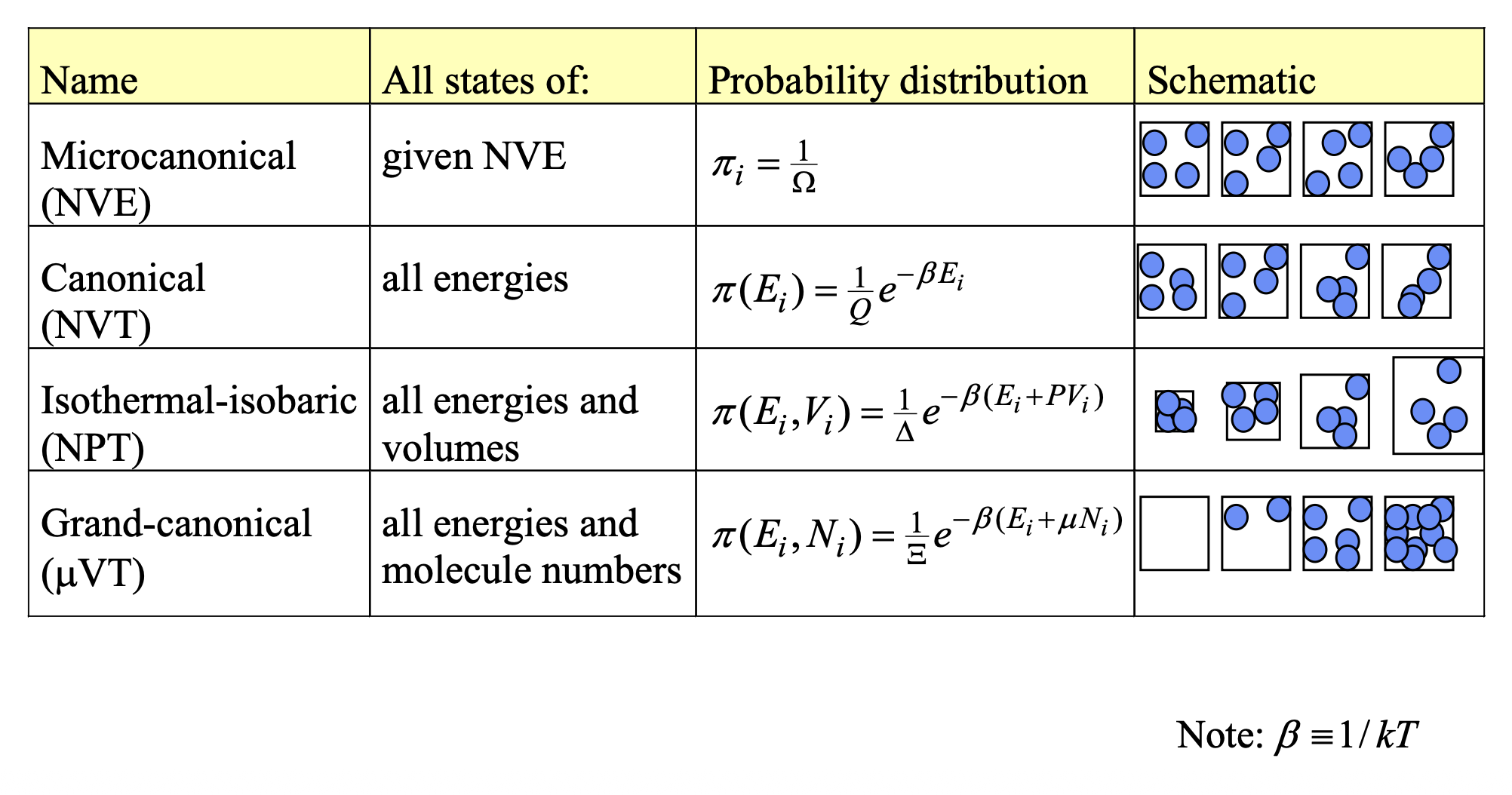

1.3. Ensembles#

A ensemble is a collection of microstates subject to at least one extrinsic constraint. (Extensive property is the property that scale with the environment)

Recall that the Boltzmann distribution:

\(p_i\) is the probability of the system in state \(i\)

\(\varepsilon_i\) is the energy of the state \(i\)

\(K_B\) is Boltzmann constant, \(T\) is the temperature

\(\mathcal{Z}\) is the normalization factor, \(\sum_i \exp(-\frac{\varepsilon_i}{K_BT})\)

2. The movement of atoms#

How the atoms interact with each other. Equation of motion:

Newtonian

Lagrangian

Hamiltonian

2.1. Newtonian motion: from force and acceleration perspective#

2.2. Lagrangian motion: From energy and path#

Lagrangian mechanics shifts the focus from forces and Cartesian coordinates to energy and “generalized coordinates.” It is based on the Principle of Least Action—the idea that a system will evolve along a trajectory that minimizes a quantity called the “action.”

we define the Lagrangian \((\mathcal{L})\) as the difference between the total kinetic energy (\(K\)) and total potential energy (\(V\)) of the system on a generalized coordinate \(q\) and generalized velocity \(\dot{q}\) .

[!NOTE]

Generalized coordinates are the absolute minimum number of independent variables required to completely define the configuration of a system, taking any physical constraints into account. They are conventionally denoted by the symbol \(q\).

\(f=3N-m\), the number of generalized coordinates \(q_1,q_2,...,q_f\) needed.

e.g. A Rigid Diatomic Molecule in MD (\(\{q_1,q_2, q_3,...,q_6\}\rightarrow \{x,y,z,\theta,\phi\}\))

2-D motion in central field \(\{q_1,q_2\} =\{r,\theta\}\)

The equations of motion are generated by solving the Euler-Lagrange equation for each degree of freedom:

\(q\): A generalized coordinate. This doesn’t have to be an \(x,y,z\) position; it could be an angle, a bond length, or any coordinate system that naturally describes the system.

\(\dot{q}\): The generalized velocity (the time derivative of the generalized coordinate \(q\)).

2.3. Hamiltonian motion: Phase space and Statistical mechanics#

While Newtonian and Lagrangian motion take \(q_i\) as fundamental variable and seeks for \(N\) \( 2^{nd}\) derivatives. The Hamiltonian seeks for \(2N\) first order derivative. It treats coordinate and its time derivative as independent variables.

First, we define the Hamiltonian (\(\mathcal{H}\)), which represents the total energy of the system (for standard conservative systems):

\(q\): The generalized coordinate.

\(p\): The generalized momentum, \(p =\frac{\partial \mathcal{L}}{\partial \dot{q}}\)

The motion of the system is described by Hamilton’s equations:

\(\dot{q}\) is the time derivative of the generalized coordinate.

\(\dot{p}\) is the time derivative of the generalized momentum \(\dot{p} = \frac{dp}{dt}\), which is equivalent to force.

[!TIP]

Legendre transform

Since \(q,\dot{q}\) is the variables of \(\mathcal{L}\), we want to \(p\) as independent variable, transform \(\dot{q}\rightarrow p\), \(p =\frac{\partial \mathcal{L}}{\partial \dot{q}}\)

\(H(q,p)=p\dot{q}-\mathcal{L}(q,\dot{q})\)

3. Time integration algorithms#

4. Temperature and Pressure dependent MD#

For standard MD, it assumes an isolated system with constant energy. However, real world simulation is under const temperature or pressure. So, how to artificially add or remove energy to keep the temperature or pressure constant is a problem.

The thermodynamic definition of temperature

\(\Omega\) is Num of microstates with given E

So, temperature defines the how much more disordered of a system given amount of energy.

high temperature: adding energy opens up few additional microstates.

low temperature: adding energy opens up many additional microstates

An Expression for the Temperature under NVE (microcanonical)

In the microcanonical ensemble, temperature is defined fundamentally through entropy S:

\(\Omega\) is the number of states or Density of states. Let \(\Phi(E)\) be the total phase-space volume inside the energy contour \(E\), \(N\) is the dimension of this phase space.

\(s\) is the area of the hyper surface.

Expression for the Temperature

4.1. Momentum temperature#

Kinetic energy: \(K(p^N)=\sum_i^N\frac{p_i^2}{2m}\)

Gradient: \(\nabla_p K=\sum_i^N(\frac{p_{ix}}{m}\hat{e}^{ix}+\frac{p_{iy}}{m}\hat{e}^{iy}+...+\frac{p_{id}}{m}\hat{e}^{id})\)

Laplacian: \(\nabla^2_pK=\nabla\cdot\nabla_pK=\sum_i^N(\frac{1}{m}+...+\frac{1}{m})=\frac{Nd}{m}\)

Temperature: \(kT=\frac{|\nabla_p K|^2}{\nabla_p^2 K}=\frac{m}{Nd}\times\sum_i^N((\frac{p_{ix}}{m})^2+(\frac{p_{iy}}{m})^2+...+(\frac{p_{id}}{m})^2)=\frac{1}{Nd}\sum_i^N\frac{p_{i}^2}{m}\)

4.2. Configurational Temperature#

Potential energy: \(U(r^N)\)

Gradient: \(\nabla_r U=\sum_i^N\frac{\partial U}{\partial r_{ix}}\hat{e_{ix}}+...+\frac{\partial U}{\partial r_{id}}\hat{e_{id}}=-\sum_i^N(F_{ix}\hat{e_{ix}}+...+F_{id}\hat{e_{id}})\)

Laplacian: \(\nabla^2_rU=\nabla\cdot\nabla_rU=-\sum_i^N(\frac{\partial F_{ix}}{\partial r_{ix}}+...+\frac{\partial F_{iy}}{\partial r_{iy}})\)

Temperature: \(kT=\frac{|\nabla_r U|^2}{\nabla_r^2 U}=-\frac{\sum_i^N \hat{F_i}^2}{\sum_i^N(\frac{\partial F_{ix}}{\partial r_{ix}}+...+\frac{\partial F_{iy}}{\partial r_{iy}})}\)

5. Types of Thermostats#

In canonical ensemble, the \(N, V, T\) are fixed. The temperature of the system can be connected to the kinetic energy by the momentum temperature:

Although the temperature and the average kinetic energy are fixed, the instantaneous kinetic energy fluctuates and with it the velocities of the particles. The instantaneous kinetic energy is often used to define the instantaneous temperature \(T_\text{in}\) by:

Because the temperature fluctuates in the finite systems as we introduced above, so the goal of thermostat is to ensure the time average of \(T(t)\) is matched with target temperature \(T_\text{target}\)

5.1. Velocity Rescaling (Simple but Flawed)#

The simplest way to control temperature is to periodically multiply all particle velocities by a scaling factor.

And the typical example is Berendsen and Andersen Thermostat.

Berendsen Thermostat#

The Berendsen thermostat weakly couples the system to an external heat bath. It forces the temperature to exponentially decay towards the target value.

\(\tau\): The coupling time constant (relaxation time). A small \(\tau\) means tight coupling (faster temperature adjustment), while a large \(\tau\) means weak coupling.

5.2. Stochastic Thermostat#

Andersen Thermostat#

The Andersen thermostat introduces stochastic (random) collisions with a virtual heat bath. Periodically, a particle is selected at random, and its velocity is reassigned from a Maxwell-Boltzmann distribution corresponding to the target temperature.

The probability of a particle undergoing a collision in a discrete time step \(\Delta t\) is governed by a collision frequency:

Langevin Thermostat#

The equation for Langevin dynamics:

\(\nabla U\) is the conservative force.

\(\gamma\): The collision frequency or friction coefficient.

\(v_i\): Velocity of the particle.

\(R_i\): The random (Gaussian) force.

Velocity-Verlet algorithm for Langevin thermostats $$

\mathcal{L}\text{Nose}=\sum{i=1}^N\frac{m}{2}\dot{r’}^2s^2-V®+\frac{Q}{2}\dot{s’}^2-gK_BT\ln s’ $$

\(s\): The extended, dimensionless variable representing the artificial heat bath.

\(\dot{r}\): The time derivative of the position vector of particle \(i\) (virtual velocity).

\(\dot{s}\) The time derivative of the heat bath variable \(s\)

\(g\) is the number of independent degrees of freedom in the physical system. For \(N\) particles in 3D space with fixed total momentum, \(g=3N−3\).

\(Q\) is the thermal inertia or “effective mass”, which determines the time scale on which the extended variable acts.

However, the Nosé Lagrangian perfectly generates the canonical ensemble, it operates in “virtual time” because the time step scales with the fluctuating variable \(s\), the extended method aims to return the equations to “real time” and real momenta. $$

H_{NH} = \sum_{i=1}^{N} \frac{|\mathbf{p}i|^2}{2m_i} + U(\mathbf{r}) + \frac{1}{2} Q \zeta^2 + N_f k_B T{target} \int_0^t \zeta(t’) dt’ $$

\(s\) can be treated as a scaling factor of the time step

So, the relation between real time and virtual times is:

u® = \begin{cases} u® - u(r_c) & r \leq r_c \ 0 & r > r_c \end{cases} $$ Shifted-force potentials

Routinely used in MD

5.3. Ewald Summation#

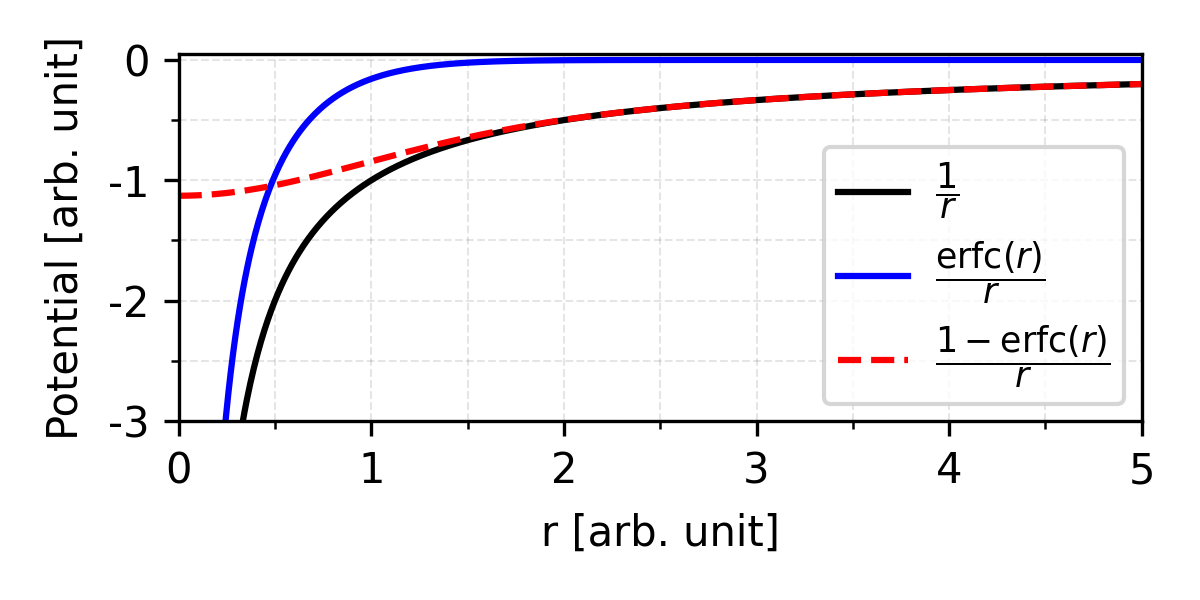

If we need to calculate the total electrostatic energy of a periodic system of point charges, the direct approach is to sum the coulomb interactions for pair-wise charges. However, recall that bulk system are usually simulated with periodic boundary conditions by cutoff. Due to minimum image convention, it only interact with nearest image.

\(L\) is the lattice vector of \(L^{th}\) unit cells.

Due to the slow convergence of \(\frac{1}{r}\), and the number of interacting particles in a 3D space grows as \(r^2\). The potential will increase with \(U_\text{total}\propto \int_0^\infty rdr\rightarrow \infty\) (for single type shell charges).

But in real crystals they are conditionally converge, because the summation of the repulsive term and attractive term, which yields a mathematically undefined \(\infty-\infty\) situation, so, directly sum of \(1/r\) is conditionally convergent, but that means the final result depends entirely on the order of summation.

Option A: \(\sum^n_0 (e-e) + (e-e)+ ...\rightarrow 0\)

Option B: \(\sum_0^n (e+e-e) + (e+e-e)+ ...\rightarrow +\infty\)

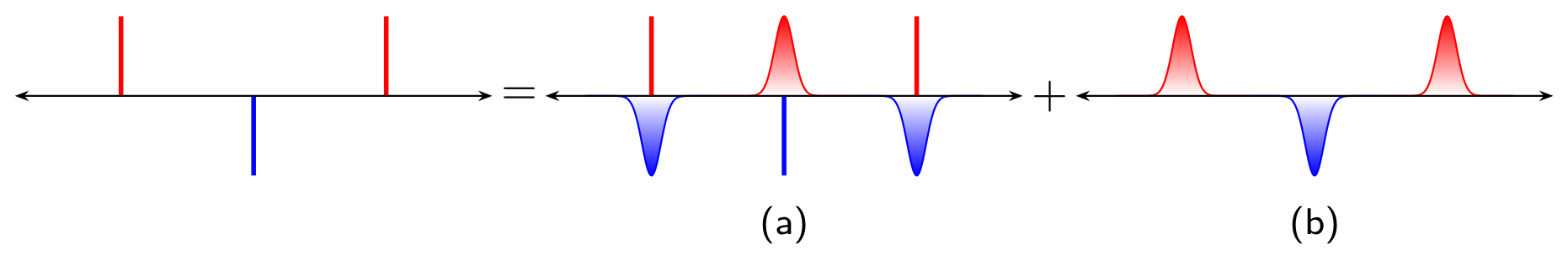

So, to deal with slow convergence, Ewald propose to calculate the potential splitter into a short-range part calculated in real space, and a long-range part calculated in reciprocal (Fourier) space.

\(f(\lambda(r))\) is a fast decay function

\(\lambda\) is the parameter that determines how fast the function decays.

The main idea of Ewald summation is to separate long-range interactions by screening at the real space. By wrapping every point charge in a diffuse cloud of opposite charge. Imaging if we are standing far away from this atom, the positive point charge and the negative Gaussian cloud perfectly cancel each other out. So, we only need to calculate the pair wise potential in the cut off range.

Ewald parameter \(\alpha\) that determines how wide or tight the Gaussian cloud is.

\(|r-r_0|\) is the distance from the center of the point charge.

\(q\) is the charge of central particle.

At the same time, we need to compensate such fake screening clouds, we superimpose a positive compensating cloud in the exact same location to cancel the fake one out.

In Ewald summation, complementary error function is chosen as the decay function:

Real space summation

Now we can calculate the short range potential caused by such smooth charge distribution:

Reciprocal space summation

Due to the periodicity of crystals, the particles interact with compensated cloud should follow the periodicity. The standard way to solve the math of periodic waves is to use a Fourier transform to switch from real space into reciprocal (momentum) space. And the Fourier transform of a Gaussian is another Gaussian.

[!TIP]

Fourier transformation of Gaussian, for \(1D\) Gaussian \(g(x)=e^{-\alpha x^2}\):

\[\begin{split} \mathcal{F}(g(k))=A\int_{-\infty}^{+\infty} e^{-\alpha x^2}e^{-ikx}dx=Ae^{-k^2/4\alpha}\int_{-\infty}^{+\infty} e^{-\alpha(x+\frac{ik}{2\alpha})^2}dx\\ =A\sqrt{\frac{\pi}{\alpha}}e^{-k^2/4\alpha}\Leftarrow\int_{-\infty}^{+\infty}e^{-\alpha(z+b)^2}dz=\sqrt{\frac{\pi}{\alpha}}\\ =A\sqrt{\frac{\pi}{\alpha}}^ne^{-(k_1^2+...+k_n^2)/4\alpha} \end{split}\]

Here the normalization factor \(A=\frac{1}{\sqrt{\Omega}}\), \(\Omega\) is the volume of the \(n\) dimension space. Proof bellow:

The let’s first get the distribution of the compensation smooth distribution.

Recall that \(e^{ikL}=1\) and \(\sum_Le^{ikL}=N\delta_{k,L}\), L is lattice vector.

\(\delta_{k,G} = 1\) if \(k_j\) and \(L_j\) share same index. And orthogonal if share different index \(j\)

\(N\) and \(V\) is the number and volume of unit cells, respectively.

\(\Omega\) is volume of the periodic materials: \(\Omega=NV\).

The charge and electrostatic potential are related by Poisson’s equation in real space

Pay attention, here we use Gaussian (cgs) units instead of SI units (\(\AA\)), if we use SI units \(\phi(r)=\frac{\rho(r)}{\epsilon_0}\)

Now, we substitute the \(\rho\) with the electrostatic potential:

And we get the potential:

Self correction term

This is a constant correction term applied to remove the interaction of a charge’s compensating cloud with the charge itself, which was inherently included in the reciprocal sum.

6. Radial distribution function (RDF)#

\(g(r)=1\) as \(r>r_\text{cutoff}\), meaning the structure becomes uniform at long distances.

\(g(r)=0\) as \(r=0\) due the core repulsion between atoms.